Note: this page is currently available for review purposes only. Our Google Cloud Marketplace offering is not yet available to the public.

Using gdotv on Google Cloud Marketplace

gdotv is available as a Kubernetes application on the Google Cloud Marketplace at https://console.cloud.google.com/marketplace/product/gdotv-public/gdotv-developer.

It's a graph database client, perfect for developers looking to start on a graph project or support an existing one. It is compatible with Google Cloud Spanner Graph, Amazon Neptune, Neo4j, Memgraph, FalkorDB, JanusGraph, Gremlin Server, Dgraph and many more graph databases.

We provide state-of-the-art development tools with advanced autocomplete, syntax checking and graph visualization.

With gdotv you can:

- View your graph database's schema in 1 click

- Write and run Gremlin, Cypher, SPARQL, GQL and DQL queries against your database

- Visualize query results across a variety of formats such as graph visualization, JSON and tables

- Explore your data interactively with our no-code graph database browser

- Debug Gremlin queries step by step, and access profiling tools for Gremlin and Cypher

It is deployed on a GKE cluster via Terraform, running five containerized services behind a LoadBalancer with TLS enabled by default.

Deploying a gdotv instance

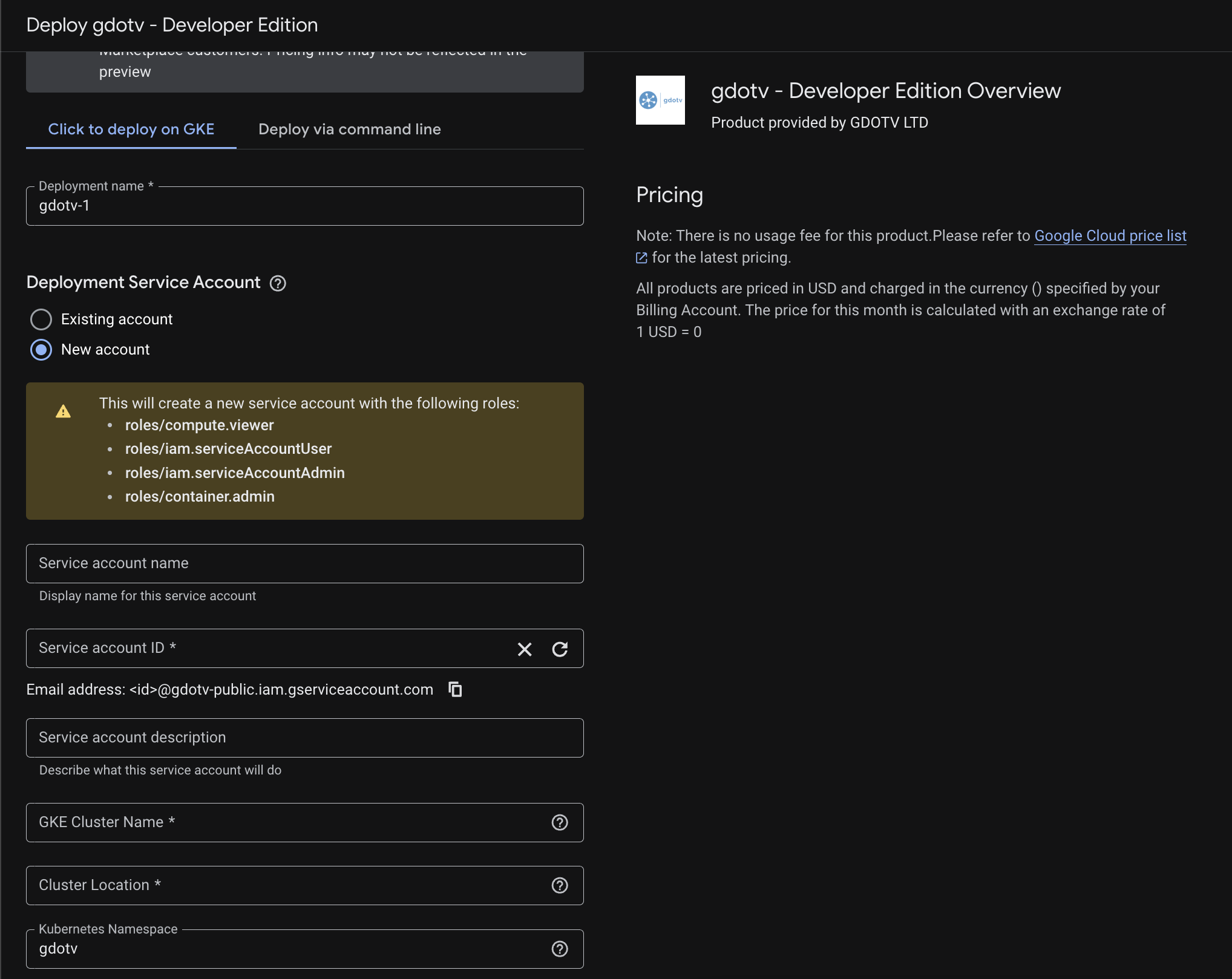

To deploy gdotv from the Google Cloud Marketplace, follow these steps:

- Navigate to the gdotv Google Cloud Marketplace listing

- Click Configure

- Select or create a GCP project for the deployment

- Configure the deployment parameters:

- GKE Cluster Name: Name of an existing GKE cluster, or a name for a new one if you check Create New Cluster

- Cluster Location: The GCP region or zone for your cluster (e.g. us-central1 or europe-west2)

- Namespace: The Kubernetes namespace to deploy into (default: gdotv)

- Hostname (optional): A custom domain name for your gdotv instance. Leave empty to auto-detect the LoadBalancer IP address

- Click Deploy

The deployment process is fully automatic. If no hostname is provided, gdotv will:

- Deploy all infrastructure components (nginx, Keycloak, PostgreSQL databases)

- Wait for the LoadBalancer to be assigned an external IP

- Configure the application with the detected IP and generate a self-signed TLS certificate

- Start the gdotv application

This process typically takes 5-10 minutes depending on cluster provisioning time.
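If you want to follow the deployment from a terminal, the "wait for the LoadBalancer" step can be approximated with a small polling loop. This is a minimal sketch, not part of the gdotv tooling: the function name wait_for_lb_ip is hypothetical, and it assumes the default gdotv namespace and the gdotv-developer-nginx service name used elsewhere on this page.

```shell
# Poll the nginx LoadBalancer service until GKE assigns an external IP.
# Usage: wait_for_lb_ip [max_attempts] [sleep_seconds]
wait_for_lb_ip() {
  local max_attempts="${1:-60}" sleep_seconds="${2:-5}" ip="" attempt=1
  while [ "$attempt" -le "$max_attempts" ]; do
    # Assumed defaults: namespace gdotv, service gdotv-developer-nginx
    ip="$(kubectl get svc gdotv-developer-nginx -n gdotv \
      -o jsonpath='{.status.loadBalancer.ingress[0].ip}' 2>/dev/null)"
    if [ -n "$ip" ]; then
      echo "$ip"
      return 0
    fi
    sleep "$sleep_seconds"
    attempt=$((attempt + 1))
  done
  echo "Timed out waiting for a LoadBalancer IP" >&2
  return 1
}
```

For example, wait_for_lb_ip 120 5 polls every five seconds for up to ten minutes and prints the IP once it is assigned.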

Pricing

gdotv on Google Cloud Marketplace uses usage-based pricing. Charges are billed through your GCP account. For pricing details, refer to the Marketplace listing page.

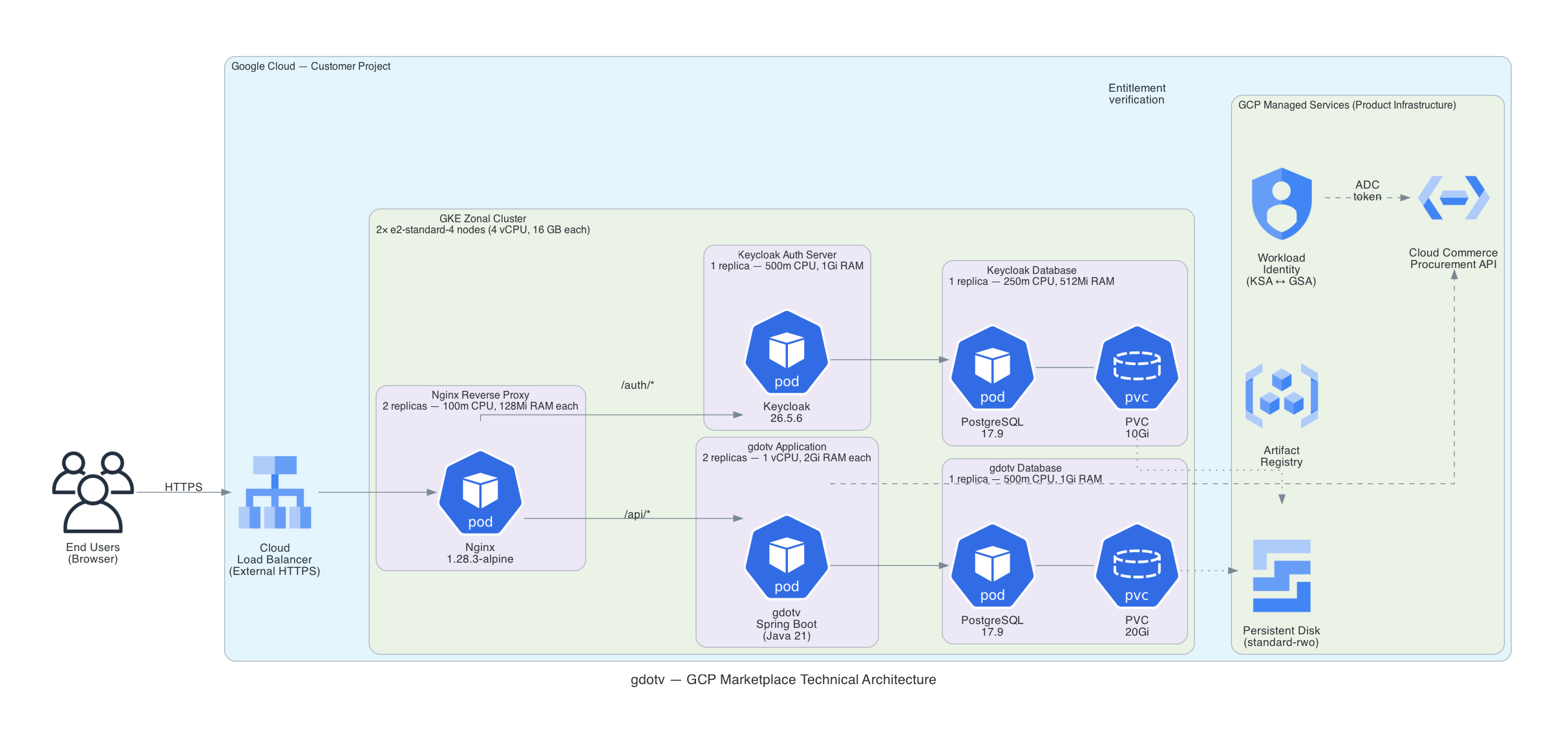

Architecture

gdotv is deployed as a set of Kubernetes workloads on GKE. The deployment includes five containers managed by Helm:

- gdotv-developer: The gdotv web application (Spring Boot + Vue.js)

- gdotv-keycloak: A Keycloak instance providing user authentication, federation and SSO capabilities

- gdotv-postgres: A PostgreSQL database storing gdotv application data

- gdotv-keycloak-postgres: A PostgreSQL database storing Keycloak configuration and realm data

- gdotv-nginx: An NGINX reverse proxy fronting the application over port 443, with TLS enabled by default

The architecture of the application is as shown below:

Sizing

The GKE node pool should be provisioned with sufficient resources to run all five containers. The following table provides sizing guidance:

| Node Machine Type | Concurrent Users | Notes |

|---|---|---|

| e2-standard-4 | Up to 10 users | Cost-efficient, suitable for evaluation and dev |

| n2-standard-4 | Up to 10 users | Recommended for most teams |

| n2-standard-8 | Up to 20 users | For larger teams |

| n2-standard-16 | Over 20 users | For enterprise deployments |

| n2-highmem-4 | Up to 10 users | Memory-optimized, for large graph datasets |

| n2-highmem-8 | Up to 20 users | Memory-optimized, for larger teams with large graphs |

| n2-highmem-16 | Over 20 users | Memory-optimized, for enterprise deployments |

The N2 family is recommended over E2 for production workloads as it provides dedicated CPU resources without sharing. The N2-highmem (memory-optimized) machine types are recommended when working with large graph datasets that require more memory per node. The C3 family (a newer generation) is also available for compute-intensive workloads.

For production use, we recommend at least 2 nodes with autoscaling enabled for high availability.
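One way to apply this recommendation to an existing node pool is the cluster autoscaler. The sketch below wraps the gcloud call in a hypothetical helper, enable_node_autoscaling; the default maximum of 4 nodes is an illustrative assumption, not a gdotv recommendation. On regional clusters, --min-nodes and --max-nodes are per-zone limits, so choose values with that in mind.

```shell
# Enable node autoscaling on an existing node pool.
# Usage: enable_node_autoscaling CLUSTER NODE_POOL REGION PROJECT [MIN] [MAX]
enable_node_autoscaling() {
  local cluster="$1" node_pool="$2" region="$3" project="$4"
  local min_nodes="${5:-2}" max_nodes="${6:-4}"  # max of 4 is an assumption
  gcloud container clusters update "$cluster" \
    --node-pool "$node_pool" \
    --enable-autoscaling \
    --min-nodes "$min_nodes" \
    --max-nodes "$max_nodes" \
    --region "$region" \
    --project "$project"
}
```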

Connecting to your GKE cluster

To run kubectl or helm commands against your gdotv deployment, you need to authenticate to the GKE cluster first.

Prerequisites

Install the following CLI tools if you haven't already:

- gcloud CLI

- kubectl

- helm (for Helm operations)

Authenticate with gcloud

Log in to your GCP account:

gcloud auth login

Set your project:

gcloud config set project <PROJECT_ID>

Fetch cluster credentials

This configures kubectl to connect to your GKE cluster:

gcloud container clusters get-credentials <CLUSTER_NAME> \

--region <REGION> \

--project <PROJECT_ID>

For example:

gcloud container clusters get-credentials gdotv-test \

--region europe-west2 \

--project gdotv-public

Verify connectivity

kubectl get pods -n gdotv

You should see the gdotv pods listed with their status.
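The pod listing is a point-in-time snapshot. To block until every workload has finished rolling out, a sketch along the following lines may help. The helper name wait_for_gdotv is hypothetical, and the workload names are the ones used by the commands elsewhere on this page.

```shell
# Wait for each gdotv workload to finish rolling out, databases first.
# Usage: wait_for_gdotv [timeout]   (for example 300s)
wait_for_gdotv() {
  local timeout="${1:-300s}" workload
  for workload in \
    statefulset/gdotv-developer-gdotv-postgres \
    statefulset/gdotv-developer-keycloak-postgres \
    deployment/gdotv-developer-keycloak \
    deployment/gdotv-developer \
    deployment/gdotv-developer-nginx; do
    # rollout status blocks until the workload is ready or the timeout expires
    kubectl rollout status "$workload" -n gdotv --timeout="$timeout" || return 1
  done
}
```

The function stops at the first workload that does not become ready, which narrows down where to look in the logs.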

Authenticate Helm with Artifact Registry

If you need to run helm upgrade commands against the gdotv chart, authenticate Helm with the OCI registry first:

gcloud auth print-access-token | helm registry login us-docker.pkg.dev \

--username oauth2accesstoken --password-stdin

This token is short-lived and will need to be refreshed if it expires.

Accessing gdotv

Once the deployment is complete, you can access gdotv at the URL shown in the Terraform outputs. If no custom hostname was configured, this will be the LoadBalancer's external IP address.

To retrieve the access URL:

terraform output gdotv_url

Or find the external IP directly:

kubectl get svc -n gdotv -l app.kubernetes.io/component=nginx

Navigate to https://<EXTERNAL_IP> in your browser. Since gdotv uses a self-signed TLS certificate by default, you will see a browser warning. Click Advanced, then Proceed to continue.
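The same reachability check can be run from a terminal. This is a small sketch with a hypothetical helper name, gdotv_http_status; it uses curl -k to skip certificate verification, which is only appropriate while the default self-signed certificate is in place.

```shell
# Print the HTTP status code returned by gdotv over HTTPS.
# Usage: gdotv_http_status HOST_OR_IP
gdotv_http_status() {
  local host="$1"
  # -k: accept the self-signed certificate; drop it once a trusted one is installed
  curl -k -s -o /dev/null -w '%{http_code}' --max-time 10 "https://${host}/"
}
```

Any HTTP status in the output means nginx answered over TLS; curl prints 000 when no response was received at all.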

Authenticating to gdotv

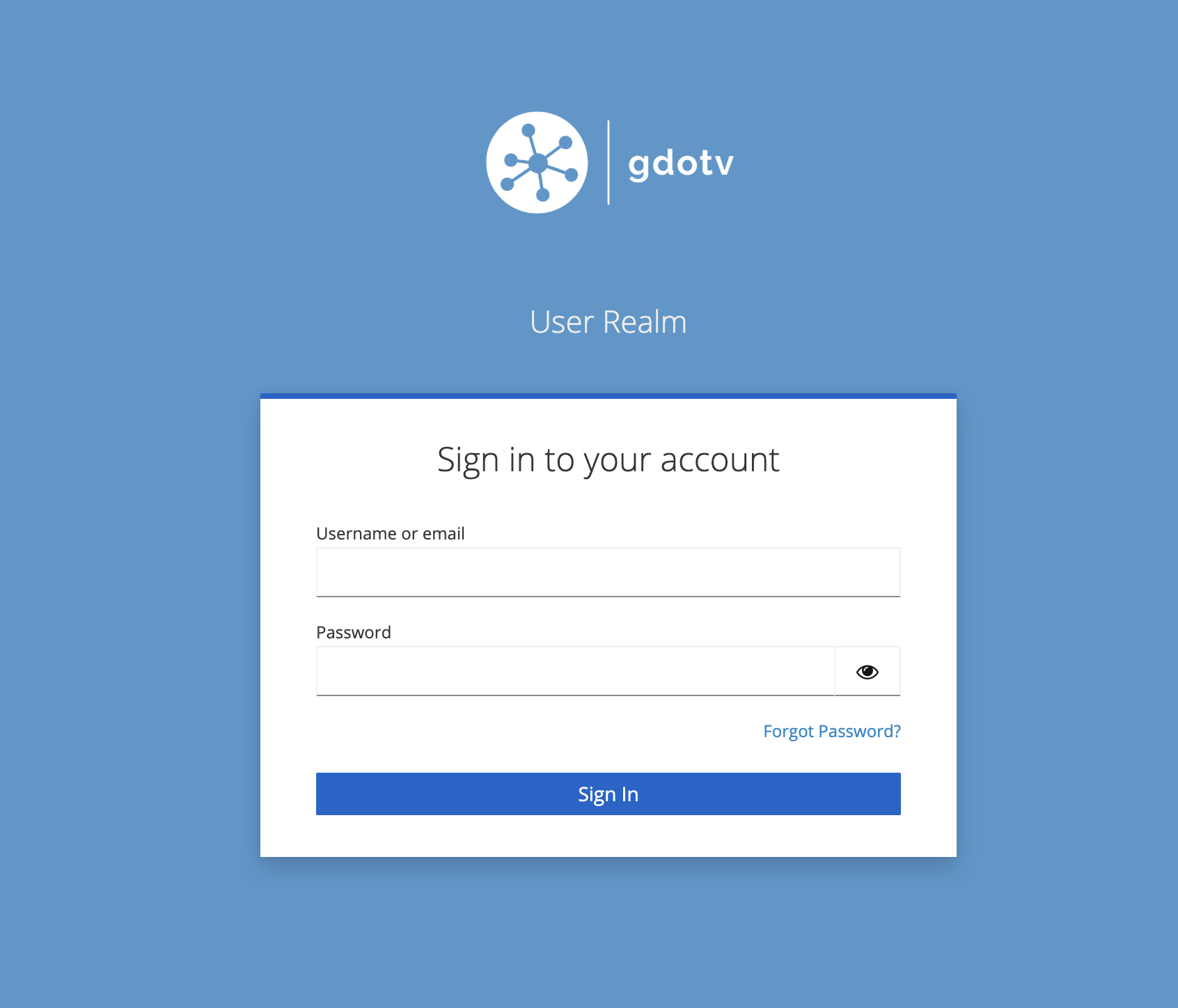

gdotv uses Keycloak for authentication. On first deployment, a default user is created automatically:

- Username: gdotv

- Password: Auto-generated during deployment

To retrieve the default user password:

terraform output -raw gdotv_user_password

When navigating to gdotv while unauthenticated, you will be presented with the Keycloak login screen:

We recommend changing the default password after your first login. To do so, click on the username in the top-right menu bar, then select Change Password. You will be redirected to the Keycloak profile management page where you can update your password.

Authenticating to the gdotv Keycloak realm

The gdotv Keycloak realm is where all gdotv users are stored and managed. New authentication flows, such as Single Sign On, can be configured from the gdotv Keycloak realm admin console.

The gdotv Keycloak realm admin console can be accessed at:

https://<HOSTNAME>/kc/admin/gdotv/console/

The default realm admin credentials are:

- Username: gdotv

- Password: Same as the default gdotv user password (see above)

Authenticating to the master Keycloak realm

The master Keycloak realm provides access to the master administration interface of Keycloak. Under normal circumstances, it should rarely need to be accessed. However, we recommend logging in after initial deployment to change the master admin password.

The master Keycloak realm admin console can be accessed at:

https://<HOSTNAME>/kc/admin/master/console/

To retrieve the master admin credentials:

terraform output -raw keycloak_admin_password

The default master admin username is admin.

Configuring a TLS certificate

By default, gdotv uses a self-signed certificate to serve its web interface over HTTPS. You may wish to configure your own trusted certificate against a domain name that you own.

WARNING

When changing the hostname, you must also update the TLS certificate in the same helm upgrade command. Running separate helm upgrade commands will cause values to be overwritten due to how --reuse-values works.
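The openssl commands in the following sections pass the hostname as a subjectAltName entry. TLS clients match IP addresses only against IP-type entries and domain names only against DNS-type entries, so the prefix has to match the kind of hostname in use. The helper below is a hypothetical sketch (san_for_host is not part of gdotv) that picks the prefix for an IPv4 address or a domain name.

```shell
# Print the subjectAltName value for a hostname:
# IP:<address> for an IPv4 address, DNS:<name> otherwise.
san_for_host() {
  local host="$1"
  if printf '%s' "$host" | grep -Eq '^([0-9]{1,3}\.){3}[0-9]{1,3}$'; then
    printf 'IP:%s\n' "$host"
  else
    printf 'DNS:%s\n' "$host"
  fi
}
```

It could then be used as -addext "subjectAltName=$(san_for_host "$HOSTNAME")" when generating a certificate.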

Renewing or replacing the certificate for the current hostname

To renew an expiring self-signed certificate or replace it with a CA-signed certificate for the hostname currently configured on your deployment:

HOSTNAME="<your-current-hostname>"

# Generate a self-signed certificate (or use your CA-issued .crt and .key files)

openssl req -x509 -nodes -days 3650 -newkey rsa:2048 \

-keyout tls.key \

-out tls.crt \

-subj "/CN=gdotv/O=gdotv" \

-addext "subjectAltName=DNS:${HOSTNAME}"

TLS_CRT=$(base64 -i tls.crt | tr -d '\n')

TLS_KEY=$(base64 -i tls.key | tr -d '\n')

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values \

--wait \

--set nginx.tls.certificate="$TLS_CRT" \

--set nginx.tls.privateKey="$TLS_KEY"

kubectl rollout restart deployment/gdotv-developer-nginx -n gdotv

Confirm the new certificate is active:

echo | openssl s_client -connect <HOSTNAME>:443 2>/dev/null | openssl x509 -noout -dates

Using a Kubernetes TLS secret for the current hostname

Alternatively, you can store your certificate as a Kubernetes TLS secret:

- Create a TLS secret in your gdotv namespace:

kubectl create secret tls my-tls-cert \

-n gdotv \

--cert=path/to/tls.crt \

--key=path/to/tls.key

- Update the Helm release to use the secret:

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values \

--wait \

--set nginx.tls.existingSecret=my-tls-cert

kubectl rollout restart deployment/gdotv-developer-nginx -n gdotv

Reverting to the default self-signed certificate

To revert to a self-signed certificate (e.g. after removing a custom domain), generate one for the current LoadBalancer IP and apply it alongside the hostname change:

# Get the current LoadBalancer IP

IP=$(kubectl get svc gdotv-developer-nginx -n gdotv -o jsonpath='{.status.loadBalancer.ingress[0].ip}')

# Generate a self-signed certificate for the IP

openssl req -x509 -nodes -days 365 -newkey rsa:2048 \

-keyout tls.key -out tls.crt \

-subj "/CN=$IP" -addext "subjectAltName=IP:$IP"

TLS_CRT=$(base64 -i tls.crt | tr -d '\n')

TLS_KEY=$(base64 -i tls.key | tr -d '\n')

# Revert hostname to the IP and apply the new cert in a single command

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values \

--wait \

--set gdotv.env.hostname="$IP" \

--set nginx.tls.existingSecret="" \

--set nginx.tls.certificate="$TLS_CRT" \

--set nginx.tls.privateKey="$TLS_KEY"

kubectl rollout restart deployment/gdotv-developer-keycloak -n gdotv

kubectl rollout restart deployment/gdotv-developer -n gdotv

kubectl rollout restart deployment/gdotv-developer-nginx -n gdotv

Configuring a custom hostname

If you deployed gdotv without a hostname (using the auto-detected LoadBalancer IP) and later want to configure a custom domain, follow the steps under Changing from auto-detected to custom hostname below.

TIP

If you have an active session on the old hostname, your browser may have cached session data that causes redirects to the old address. We recommend logging out of gdotv before changing the hostname, or testing the new hostname in a private/incognito browser window.

Hostname and TLS certificate persistence

When gdotv auto-detects the LoadBalancer IP during deployment, the hostname and TLS certificate are persisted in a Kubernetes ConfigMap. This persistence means:

- Hostname survives pod restarts and node scaling events

- TLS certificate remains valid across the lifetime of your deployment

- You can safely update your deployment without losing the auto-detected configuration

Changing from auto-detected to custom hostname

- Fetch the LoadBalancer IP of your gdotv deployment:

kubectl get svc gdotv-developer-nginx -n gdotv -o jsonpath='{.status.loadBalancer.ingress[0].ip}'

- Create a DNS record (A record) on your domain manager of choice, pointing your domain (e.g. gdotv.example.com) to the LoadBalancer IP.

- Update the hostname and TLS certificate together in a single helm upgrade command. Disable hostname auto-detection so that gdotv and Keycloak use your custom hostname instead of the auto-detected one:

With a self-signed certificate:

HOSTNAME="gdotv.example.com"

openssl req -x509 -nodes -days 3650 -newkey rsa:2048 \

-keyout tls.key -out tls.crt \

-subj "/CN=gdotv/O=gdotv" \

-addext "subjectAltName=DNS:${HOSTNAME}"

TLS_CRT=$(base64 -i tls.crt | tr -d '\n')

TLS_KEY=$(base64 -i tls.key | tr -d '\n')

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values \

--wait \

--set gdotv.hostnameDetect.enabled=false \

--set gdotv.env.hostname="$HOSTNAME" \

--set nginx.tls.certificate="$TLS_CRT" \

--set nginx.tls.privateKey="$TLS_KEY"

With a Kubernetes TLS secret:

kubectl create secret tls my-tls-cert \

-n gdotv \

--cert=path/to/tls.crt \

--key=path/to/tls.key

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values \

--wait \

--set gdotv.hostnameDetect.enabled=false \

--set gdotv.env.hostname=gdotv.example.com \

--set nginx.tls.existingSecret=my-tls-cert

- Restart all services to pick up the changes (Keycloak first, then gdotv):

kubectl rollout restart deployment/gdotv-developer-keycloak -n gdotv

kubectl rollout restart deployment/gdotv-developer -n gdotv

kubectl rollout restart deployment/gdotv-developer-nginx -n gdotv

The key difference is the --set gdotv.hostnameDetect.enabled=false flag, which ensures that gdotv and Keycloak read your custom hostname from the Helm values rather than from the auto-detected ConfigMap.
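Before running the helm upgrade step above, it can be worth confirming that the new DNS record already resolves to the LoadBalancer IP. The following is a sketch only: dns_points_to_lb is a hypothetical helper, and it assumes dig is installed and that the deployment uses the default gdotv namespace and gdotv-developer-nginx service.

```shell
# Succeed only if the domain's A record points at the LoadBalancer IP.
# Usage: dns_points_to_lb DOMAIN
dns_points_to_lb() {
  local domain="$1" lb_ip resolved
  lb_ip="$(kubectl get svc gdotv-developer-nginx -n gdotv \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}')"
  # Without a LoadBalancer IP there is nothing to compare against
  [ -n "$lb_ip" ] || return 1
  resolved="$(dig +short A "$domain")"
  # dig may print several records, one per line; require an exact match
  printf '%s\n' "$resolved" | grep -Fxq "$lb_ip"
}
```

For example, dns_points_to_lb gdotv.example.com && echo "DNS is ready" prints the message only once the record has propagated to the resolver in use.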

Upgrading to a new version

gdotv receives frequent updates with new features and improvements. Upgrading only updates the gdotv application container. Keycloak, nginx and the databases are unaffected.

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values \

--wait \

--set gdotv.image.tag=<NEW_VERSION>

--reuse-values preserves all previously set values (passwords, hostname, certificates) so they do not need to be passed again.
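To see which values the release currently stores, and therefore what --reuse-values will carry forward, helm get values can be used. The wrapper below is a hypothetical convenience (show_release_values is not part of gdotv) that assumes the default release name and namespace from this page. The output may include passwords and the TLS private key, so treat it as sensitive.

```shell
# Print the user-supplied values stored in the gdotv Helm release.
# Usage: show_release_values [RELEASE] [NAMESPACE]
show_release_values() {
  local release="${1:-gdotv-developer}" namespace="${2:-gdotv}"
  helm get values "$release" --namespace "$namespace" --output yaml
}
```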

Monitor the rollout:

kubectl rollout status deployment/gdotv-developer -n gdotv

If the new version fails to start, roll back to the previous release:

helm rollback gdotv-developer -n gdotv

Backup and Restore

Recommended: Backup for GKE

For production deployments, we recommend using Backup for GKE, a managed GCP service that provides scheduled, namespace-scoped backups of both Kubernetes resources (configs, secrets) and persistent volume data. This is the most robust approach as it captures the full state of the gdotv namespace, including both PostgreSQL data volumes and all Kubernetes secrets.

To set up Backup for GKE:

- Enable the Backup for GKE API on your project:

gcloud services enable gkebackup.googleapis.com --project=<PROJECT_ID>

- Enable the Backup for GKE add-on on your cluster:

gcloud container clusters update <CLUSTER_NAME> \

--region=<REGION> \

--update-addons=BackupRestore=ENABLED \

--project=<PROJECT_ID>

- Create a backup plan targeting the gdotv namespace:

gcloud beta container backup-restore backup-plans create gdotv-backup-plan \

--project=<PROJECT_ID> \

--location=<REGION> \

--cluster=projects/<PROJECT_ID>/locations/<REGION>/clusters/<CLUSTER_NAME> \

--selected-namespaces=gdotv \

--include-volume-data \

--cron-schedule="0 2 * * *" \

--backup-retain-days=30

This creates a daily backup at 2:00 AM with 30-day retention. Adjust the schedule and retention to suit your requirements.

To restore from a backup, follow the Backup for GKE restore documentation.

Manual backup with pg_dump

For one-off backups or environments where Backup for GKE is not available, you can back up the PostgreSQL databases directly. gdotv uses two PostgreSQL instances: one for the application (gdotv-developer-gdotv-postgres) and one for Keycloak (gdotv-developer-keycloak-postgres).

Back up the gdotv application database:

kubectl exec -n gdotv statefulset/gdotv-developer-gdotv-postgres -- \

pg_dump -U postgres --clean --if-exists postgres \

> gdotv-backup-$(date +%Y%m%d-%H%M%S).sql

Back up the Keycloak database:

kubectl exec -n gdotv statefulset/gdotv-developer-keycloak-postgres -- \

pg_dump -U postgres --clean --if-exists postgres \

> keycloak-backup-$(date +%Y%m%d-%H%M%S).sql

Restore the gdotv application database:

WARNING

Restoring overwrites current data. Stop the dependent application pod first to avoid write conflicts.

# Scale down the application before restoring

kubectl scale deployment/gdotv-developer -n gdotv --replicas=0

# Restore

cat gdotv-backup-<timestamp>.sql | kubectl exec -i -n gdotv \

statefulset/gdotv-developer-gdotv-postgres -- \

psql -U postgres postgres

# Scale back up

kubectl scale deployment/gdotv-developer -n gdotv --replicas=2

Restore the Keycloak database:

# Scale down Keycloak before restoring

kubectl scale deployment/gdotv-developer-keycloak -n gdotv --replicas=0

# Restore

cat keycloak-backup-<timestamp>.sql | kubectl exec -i -n gdotv \

statefulset/gdotv-developer-keycloak-postgres -- \

psql -U postgres postgres

# Scale back up

kubectl scale deployment/gdotv-developer-keycloak -n gdotv --replicas=1

Stop / Start Services

Stop all services (scale to zero)

This preserves PVCs and Secrets, so no data is lost.

kubectl scale deployment/gdotv-developer -n gdotv --replicas=0

kubectl scale deployment/gdotv-developer-keycloak -n gdotv --replicas=0

kubectl scale deployment/gdotv-developer-nginx -n gdotv --replicas=0

kubectl scale statefulset/gdotv-developer-gdotv-postgres -n gdotv --replicas=0

kubectl scale statefulset/gdotv-developer-keycloak-postgres -n gdotv --replicas=0

Start all services

Start order matters: databases first, then Keycloak, then gdotv, then nginx.

kubectl scale statefulset/gdotv-developer-gdotv-postgres -n gdotv --replicas=1

kubectl scale statefulset/gdotv-developer-keycloak-postgres -n gdotv --replicas=1

kubectl scale deployment/gdotv-developer-keycloak -n gdotv --replicas=1

kubectl scale deployment/gdotv-developer -n gdotv --replicas=2

kubectl scale deployment/gdotv-developer-nginx -n gdotv --replicas=2

Restart a single service

kubectl rollout restart deployment/gdotv-developer -n gdotv

kubectl rollout restart deployment/gdotv-developer-keycloak -n gdotv

kubectl rollout restart deployment/gdotv-developer-nginx -n gdotv

Watch rollout progress:

kubectl rollout status deployment/gdotv-developer -n gdotv

Check Logs

gdotv application

# Live logs

kubectl logs -f -n gdotv deployment/gdotv-developer

# Logs from the previous (crashed) container

kubectl logs -n gdotv deployment/gdotv-developer --previous

Keycloak

kubectl logs -f -n gdotv deployment/gdotv-developer-keycloak

nginx

kubectl logs -f -n gdotv deployment/gdotv-developer-nginx

Bootstrap job

kubectl get pods -n gdotv -l app.kubernetes.io/component=bootstrap

kubectl logs -n gdotv -l app.kubernetes.io/component=bootstrap

All pods

kubectl get pods -n gdotv -l app.kubernetes.io/instance=gdotv-developer

Pod events (useful for crash diagnosis)

kubectl describe pod -n gdotv -l app.kubernetes.io/component=gdotv-app

Scaling

Scale the gdotv application

The gdotv application is stateless (session state is stored in PostgreSQL). It can safely run multiple replicas.

# Via kubectl

kubectl scale deployment/gdotv-developer -n gdotv --replicas=3

# Or persistently via helm upgrade

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values \

--wait \

--set gdotv.replicaCount=3

Scale nginx

nginx is stateless and can be scaled freely:

kubectl scale deployment/gdotv-developer-nginx -n gdotv --replicas=3

Keycloak and databases

Keycloak and both PostgreSQL instances run as single replicas. Scaling them requires additional configuration (Keycloak clustering, PostgreSQL replication) that is outside the scope of this deployment. For production HA requirements, consider using Cloud SQL for PostgreSQL and a Keycloak Operator.

Cluster-level scaling

If pods cannot be scheduled due to insufficient node resources:

gcloud container clusters resize <CLUSTER_NAME> \

--region <REGION> \

--num-nodes 3 \

--project <PROJECT_ID>

GCP: Workload Identity for Google Cloud Connectors

To connect gdotv to Google Cloud services (Cloud Spanner Graph, BigQuery) without service account key files, use Workload Identity. This links the Kubernetes Service Account used by the gdotv pod to a Google Service Account (GSA), providing short-lived credentials automatically.

Prerequisites

Verify Workload Identity is enabled on the cluster:

gcloud container clusters describe <CLUSTER_NAME> \

--region=<REGION> \

--format="value(workloadIdentityConfig.workloadPool)"

If the output is empty, enable it:

gcloud container clusters update <CLUSTER_NAME> \

--region=<REGION> \

--workload-pool=<PROJECT_ID>.svc.id.goog

gcloud container node-pools update <NODE_POOL_NAME> \

--cluster=<CLUSTER_NAME> \

--region=<REGION> \

--workload-metadata=GKE_METADATA

Step 1 - Create a Google Service Account

gcloud iam service-accounts create gdotv-backend \

--project=<PROJECT_ID> \

--display-name="gdotv Backend"

Step 2 - Grant permissions

Add only the roles required for the Google Cloud services gdotv needs to connect to:

# Cloud Spanner Graph

gcloud projects add-iam-policy-binding <PROJECT_ID> \

--member="serviceAccount:gdotv-backend@<PROJECT_ID>.iam.gserviceaccount.com" \

--role="roles/spanner.databaseUser"

# BigQuery (if needed)

gcloud projects add-iam-policy-binding <PROJECT_ID> \

--member="serviceAccount:gdotv-backend@<PROJECT_ID>.iam.gserviceaccount.com" \

--role="roles/bigquery.dataViewer"

Cross-project access

If your Spanner Graph or BigQuery instances are in a different GCP project than the one hosting gdotv, grant the roles on the target project instead:

# Grant Spanner access on the target project

gcloud projects add-iam-policy-binding <TARGET_PROJECT_ID> \

--member="serviceAccount:gdotv-backend@<GDOTV_PROJECT_ID>.iam.gserviceaccount.com" \

--role="roles/spanner.databaseUser"

The GSA (gdotv-backend@<GDOTV_PROJECT_ID>) lives in the gdotv project, but the IAM binding is added to the project where the database resides. The same applies to BigQuery roles.
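To confirm that a binding landed on the intended project, the project's IAM policy can be filtered by the service account. This is a sketch with a hypothetical helper name, list_gsa_roles; pass the project that hosts the database, which may differ from the project that hosts gdotv.

```shell
# List the roles granted to a Google Service Account on a project.
# Usage: list_gsa_roles TARGET_PROJECT_ID GSA_EMAIL
list_gsa_roles() {
  local target_project="$1" gsa_email="$2"
  gcloud projects get-iam-policy "$target_project" \
    --flatten="bindings[].members" \
    --filter="bindings.members:serviceAccount:${gsa_email}" \
    --format="value(bindings.role)"
}
```

If the grants from Step 2 were applied, the output should include roles/spanner.databaseUser and, where granted, roles/bigquery.dataViewer.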

Step 3 - Bind the KSA to the GSA

gcloud iam service-accounts add-iam-policy-binding \

gdotv-backend@<PROJECT_ID>.iam.gserviceaccount.com \

--role="roles/iam.workloadIdentityUser" \

--member="serviceAccount:<PROJECT_ID>.svc.id.goog[gdotv/gdotv-developer]"

Step 4 - Annotate the Kubernetes Service Account

If you haven't already, make sure you have authenticated to your GKE cluster by following the instructions in Connecting to your GKE cluster.

kubectl annotate serviceaccount gdotv-developer -n gdotv \

iam.gke.io/gcp-service-account=gdotv-backend@<PROJECT_ID>.iam.gserviceaccount.com \

--overwrite

Restart the gdotv pod to pick up the annotation:

kubectl rollout restart deployment/gdotv-developer -n gdotv

Step 5 - Verify

kubectl exec -n gdotv deployment/gdotv-developer -- \

curl -s "http://metadata.google.internal/computeMetadata/v1/instance/service-accounts/default/email" \

-H "Metadata-Flavor: Google"

# Expected: gdotv-backend@<PROJECT_ID>.iam.gserviceaccount.com

Uninstalling

To completely remove gdotv from your cluster:

helm uninstall gdotv-developer -n gdotv

kubectl delete namespace gdotv

To also destroy the GKE cluster (if it was created by Terraform):

cd gdotv-k8s/terraform

terraform destroy

Troubleshooting

The application is not accessible over HTTPS

- Check that the LoadBalancer has an external IP assigned:

kubectl get svc -n gdotv

- Verify the GKE firewall rules allow inbound traffic on port 443

- Check that all pods are running:

kubectl get pods -n gdotv

gdotv pods are in CrashLoopBackOff

This typically indicates a hostname or Keycloak connectivity issue. Check the logs:

kubectl logs -n gdotv deployment/gdotv-developer

Common causes:

- Hostname mismatch between the configured hostname and the actual service endpoint

- Keycloak not fully ready when gdotv starts (init containers should handle this, but check events)

Pods stuck in Pending state

The cluster may not have sufficient node resources. Check events:

kubectl get events -n gdotv --sort-by='.lastTimestamp' | tail -20

If nodes are the issue, scale the node pool:

gcloud container clusters resize <CLUSTER_NAME> \

--region <REGION> \

--num-nodes 3 \

--project <PROJECT_ID>

Bootstrap job failed

The bootstrap job creates the initial Keycloak user. If it failed, check its logs:

kubectl logs -n gdotv -l app.kubernetes.io/component=bootstrap

You can re-trigger it by running a Helm upgrade:

helm upgrade gdotv-developer oci://us-docker.pkg.dev/gdotv-public/gdotv-developer/gdotv \

--namespace gdotv \

--reuse-values

Additional support

For any support queries, email us at support@gdotv.com. Support is free and we answer all queries within one business day.