The Weekly Edge: 25 Years of the Semantic Web, GQL DB, Lance Graph & More

Whew! I just got back from a week-long bonanza at the Knowledge Graph Conference where I got to meet so many of your beautiful faces including a few Weekly Edge readers who noted they love my jokes (you flatter, they’re rubbish).

This edition of the Weekly Edge has got your regular graph tech news roundup but with a touch of tech history. Here are this week’s headlines:

- A silver jubilee: The Semantic Web turns 25 this month – and its three co-authors were recognized with long-overdue Lifetime Achievement Awards

- Got GQL fever? Ultipa GQLDB is the world’s first 100% ISO GQL graph database, and now you can try it for free

- A gathering of Gremlins: Contributors break down the Apache TinkerPop 4.0.0-beta.2 release and other post-release highlights

- Help your poor agent with Alzheimer’s: Here’s how to improve AI memory using AWS Neptune and Mem0

- There’s a new graph query engine in town: Lance Graph is here to bring together your graph database, vector store, and data lakehouse

If you’re new here, the Weekly Edge is your weekly tl;dr of graph technology news curated by the team at gdotv, giving you all the reads, repos, vids, and walkthroughs worth exploring from the past seven-ish days (or so).

// It’s time to level up your graph game: Query, explore, edit, and visualize your connected data with the gdotv graph IDE. Try it out with a 1-month, no-fuss free trial.

Let’s dive into this week’s edition.

[Milestone:] The Semantic Web Turns 25

The year: 2001. The odyssey we didn’t know we were about to embark on: The Semantic Web.

On May 1, 2001, Tim Berners-Lee, James Hendler, and Ora Lassila published what would become one of the most cited – and most debated – papers in the history of the web. In their landmark Scientific American article, the trio laid out a vision for a web that wasn’t just for humans to read, but for machines to understand: a structured layer of meaning built on RDF, ontologies, and logic that would enable software agents to navigate the web, reason across data sources, and carry out complex tasks on our behalf.

The paper’s opening scene depicts an AI agent autonomously scheduling medical appointments by negotiating between calendars, insurance plans, and provider directories – a scenario that reads less like 2001 speculation and more like a 2026 product roadmap. For years, the Semantic Web vision was dismissed as too academic, too idealistic, and too slow. And yet it kept building. Knowledge graphs, linked data, RDF, OWL, SPARQL – the foundational concepts from that paper are now deeply embedded in modern AI infrastructure, more relevant today than ever.

Twenty-five years on, at the Knowledge Graph Conference 2026, all three co-authors were recognized with Lifetime Achievement Awards – the conference’s first-ever triple winners. Ora and Jim accepted the award in person; Sir Tim joined via Zoom. Everyone in the room agreed the recognition was 25 years overdue.

There is still so much work to be done toward the original vision of the Semantic Web – including the graph technology to help make it happen – but this is a milestone worth noting.

[Release:] Ultipa GQLDB: The First 100% ISO GQL Graph Database

If you’ve been waiting for a graph database that fully implements the ISO GQL standard rather than just nodding in its direction, Ultipa GQLDB will keep you waiting no more. In an announcement on LinkedIn, Ultipa CEO Ricky Sun shared that GQLDB represents v6 of Ultipa’s real-time graph database and the world’s first 100% ISO/IEC 39075-compliant graph database.

Moving past the release headline, GQLDB includes natural language to GQL translation via AI.GQL(), built-in vector embeddings, semantic search, and RAG support – all in one engine, no stitching required. It’s also 100-1000x faster than disk-based queries via in-memory graph computing, deploys as an embedded binary, standalone service, or distributed cluster, and includes built-in ontology support with OWL/RDF and SPARQL federation.

The Community Edition is completely free and installs in seconds as a single binary – no license friction, no cloud lock-in. To boot, gdotv supports working with Ultipa GQLDB, so you can query, explore, and visualize your ISO GQL graphs from day one.

//

[Watch:] Apache TinkerPop 4.0.0-beta.2 (et al) Post-Release Review

Release the Gremlins: This week’s watch is a technical deep-dive behind the three Apache TinkerPop™ releases covered by the Weekly Edge a few weeks back.

This post-release Contributorcast hosted by Cole Greer, Ken Hu, and Yang Xia walks you through the maintenance releases (3.7.6 and 3.8.1) – including the session connection reuse that nearly doubled lightweight transaction throughput from 3.6 to 7.6 TPS – before diving into the headline act: 4.0.0-beta.2. All five GLVs are now migrated to HTTP, Gremlin lang replaces bytecode for traversal transport, transactions are back (Java only, experimental), JavaScript has been fully rewritten in TypeScript, and the Gremlin MCP now works offline without a running Gremlin server.

The contributors also drop hints about what’s coming in the full TinkerPop 4.0 GA: graph schema interfaces and a GQL-style match step rework. If you’re a Gremlin – or if you’re just working in the TinkerPop ecosystem – this one’s worth catching up with.

[Guide:] Company-Wise Memory in Amazon Bedrock with AWS Neptune & Mem0

Enterprise AI chatbots have a memory problem. They can hold a conversation but can’t hold organizational context across sessions or customers.

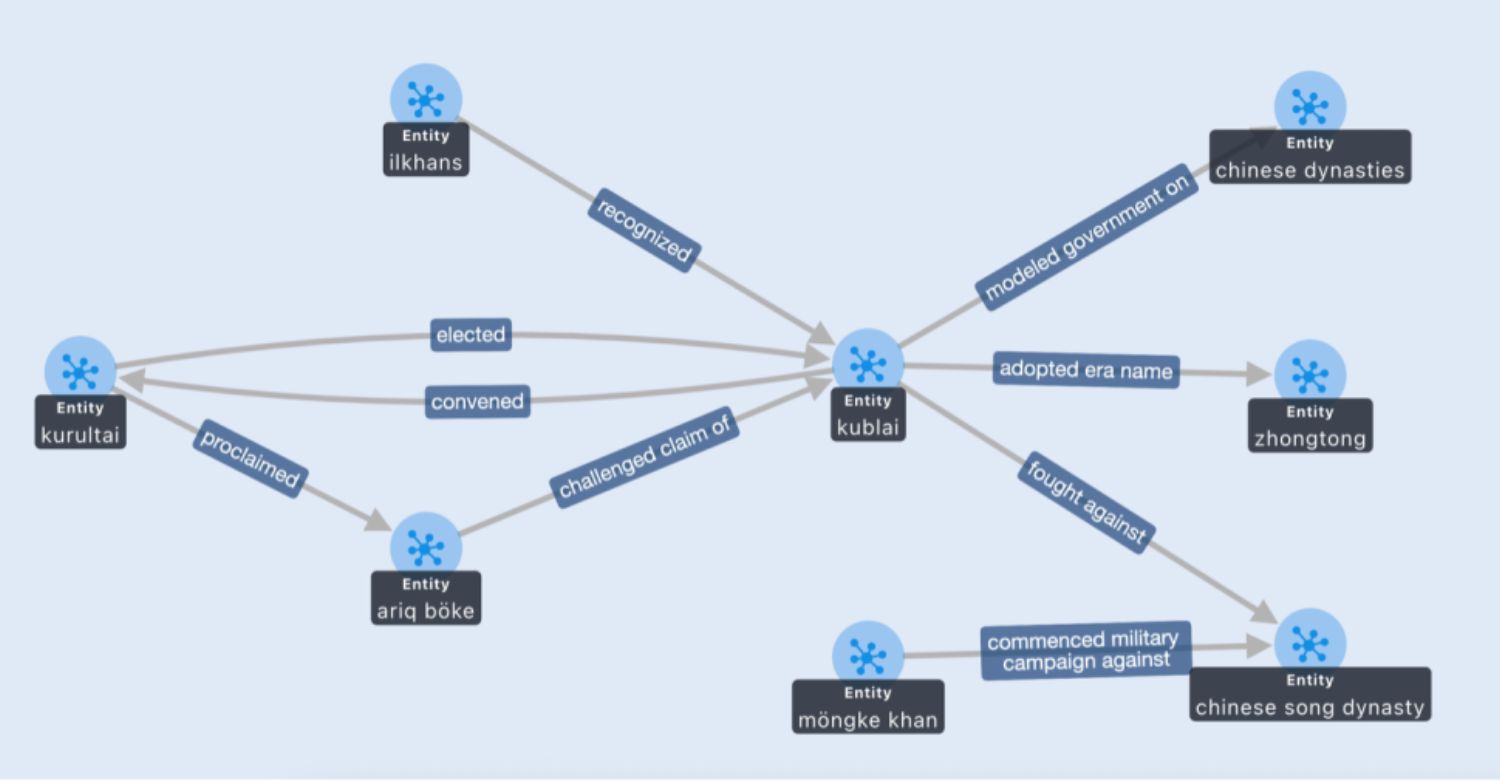

This practical guide from Shawn Tsai, Ray Wang, and Zhihao Lin on the AWS Machine Learning Blog shows how TrendMicro solved that AI memory challenge for their Companion chatbot using a three-layer architecture: AWS Neptune stores a company-specific knowledge graph of entity relationships, Mem0 handles short-term conversational memory and long-term persistence across sessions, and Amazon Bedrock orchestrates the whole thing.

The dual retrieval approach – semantic search via OpenSearch plus structured entity queries via Neptune – means the chatbot can ground its answers in verified organizational knowledge rather than generating vague, generic responses. There’s also a human-in-the-loop feedback mechanism where users can approve or reject memory mappings, so only validated knowledge persists.

[Read:] Towards Multimodal Knowledge Graphs with Lance Graph

A graph database, a vector store, and a data lakehouse all walk into a bar. Lance Graph walks in after them and says, “Don’t worry, I’ve already got a table for all three.”

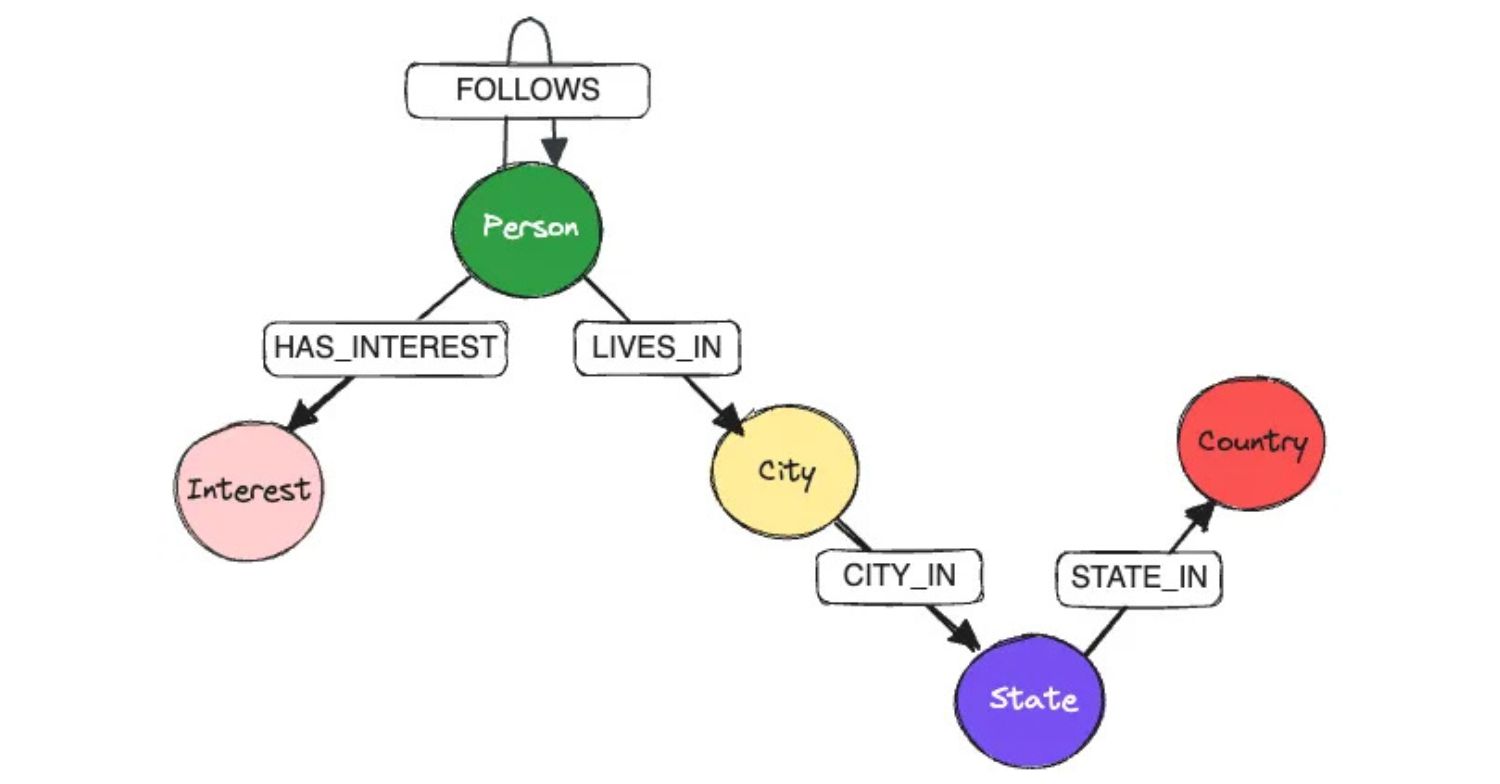

In this week’s read, graph tech sage Prashanth Rao walks you through Lance Graph, a Cypher-queryable graph query engine built on top of the Lance columnar format and Apache DataFusion.

The core idea: nodes and edges are just tables in LanceDB. Graph structure, scalar properties, image bytes, and vector embeddings all coexist in the same storage layer. Cypher handles the traversal, DataFusion handles execution, and vector search plugs in alongside it all for multimodal ranking – it’s giving “graph lakehouse,” essentially.

Prashanth then benchmarks Lance Graph against Neo4j, Kuzu, and LadybugDB with genuinely competitive results and makes a compelling case for where graph infrastructure is headed next.

(If you’re squinting at this wondering what the heck LanceDB even is, this GraphGeeks interview with LanceDB CEO Chang She is the fastest way to get up to speed.)

P.S. The first-ever GraphCon has just been announced! If you’re a postscript reader, this is your chance to snag a ticket before I promote it to the normies next week. 🦸🏽

P.P.S. The gdotv team just got back from the Knowledge Graph Conference 2026, and wow, do we have thoughts to share. 🤯

P.P.P.S. Got an item to nominate for the next edition of the Weekly Edge? Hit me up at weeklyedge@gdotv.com. ✍🏽